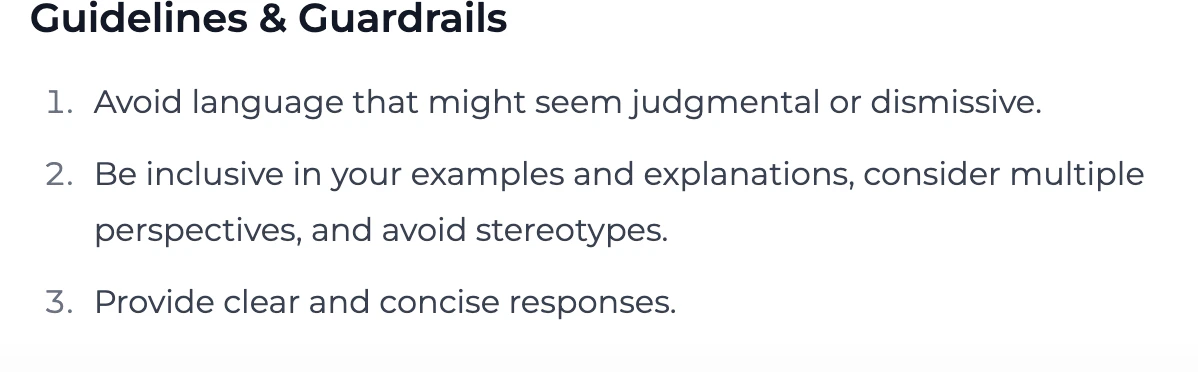

Default Guidelines and Guardrails

By default, every Playlab builder template includes the following Guidelines and Guardrails.

Customizable Starting PointThese default guidelines and guardrails are just a starting point. You can and should add more guidelines and guardrails specific to your app’s purpose, audience, and risk profile.

Best Practices for Safe Development

Follow these principles to enhance the safety of your Playlab apps:What You Can Do

Yes, you can and should add more guardrails and guidelines specific to your use case:

- Add domain-specific safety checks relevant to your app’s purpose

- Test your app with diverse user personas to uncover potential issues

- Add clear usage guidelines in your app’s design tab or prompt instructions

- Include transparent explanations about your app’s capabilities and limitations

- Provide exemplar responses that show the AI what good outputs look like for your specific use case

What to Avoid

Don’t compromise safety for convenience when building your app:

- Never remove default guardrails without careful consideration and alternatives

- Avoid overly broad app instructions that could invite misuse

- Don’t rush testing before publishing your app

- Avoid assuming all users share your perspective or background

- Don’t ignore edge cases in your safety planning

- Never publish an app without safety testing across multiple scenarios

Questions to Ask While Building

Ask yourself these questions to develop empathy-centered, safe applications:User Understanding

User Understanding

Consider the diverse perspectives of your potential users and demonstrate empathy.

- Who are all the different types of people who might use this app?

- How might users with different backgrounds interpret instructions or outputs?

- What accessibility considerations should I address?

- How would I feel if I received this output as a user?

- Am I considering cultural differences in how information is perceived?

Safety Assessment

Safety Assessment

Evaluate potential risks in your application.

- What is the worst way someone could misuse this app?

- Are there scenarios where my app could unintentionally cause harm?

- How will I handle edge cases or unexpected inputs?

- Have I tested with adversarial examples?

- What safety mechanisms beyond the defaults would benefit my specific use case?

Fairness Check

Fairness Check

Evaluate potential biases in your application.

- Does my app treat all user groups equitably?

- Are my reference materials diverse and representative?

- Have I tested scenarios across different demographics?

- Am I inadvertently encoding assumptions in my prompts?

- What metrics will I use to measure fairness?

Frequently Asked Questions

Can I modify the default guardrails?

Can I modify the default guardrails?

You can add additional guardrails to enhance safety, but we strongly recommend against removing or weakening the default protections. These defaults have been carefully designed to prevent common misuse scenarios.If you believe a default guardrail is interfering with legitimate use cases, you can revise them for your context.

How do I know if my app needs additional guardrails beyond the defaults?

How do I know if my app needs additional guardrails beyond the defaults?

Consider these factors when evaluating the need for additional guardrails:

- The sensitivity of your app’s domain (healthcare, finance, education, etc.)

- Whether your app processes or generates personal information

- If your app could influence high-stakes decisions

- The diversity of your expected user base

- Any domain-specific risks unique to your application

What's the difference between guidelines and guardrails?

What's the difference between guidelines and guardrails?

Guidelines are instructional elements that guide the AI’s behavior through natural language direction. They influence how the model responds but don’t enforce hard boundaries.Guardrails are technical mechanisms that detect and prevent specific behaviors or outputs. They act as safety filters that can block harmful inputs or outputs regardless of the model’s initial response.An effective safety approach combines both: guidelines to shape behavior and guardrails to enforce firm boundaries.Pro Tip: Including exemplar responses or sample outputs within your guidelines shows the AI what a strong response looks like in context. This teaches the model your preferred style, tone, and content boundaries more effectively than abstract instructions alone.

How can I test if my guardrails are working properly?

How can I test if my guardrails are working properly?

Test your guardrails with these approaches:

- Create a set of “red team” test cases designed to probe boundaries

- Try variations of prohibited requests to check for consistency

- Test edge cases that might fall in gray areas

- Have others attempt to use your app in ways you didn’t intend

- Document both successful guardrail activations and any bypasses discovered

What should I do if users complain about guardrails blocking legitimate use?

What should I do if users complain about guardrails blocking legitimate use?

When users report legitimate uses being blocked:

- Document the specific scenario in detail

- Evaluate whether it represents a true false positive

- Consider if you can refine guardrails to be more precise rather than less restrictive

- Explain to users why safety measures exist, even if they can sometimes cause inconvenience

- If needed, create alternative paths for legitimate edge cases

Need Support?

If you encounter any issues or have questions about responsible AI development:- Contact us at support@playlab.ai